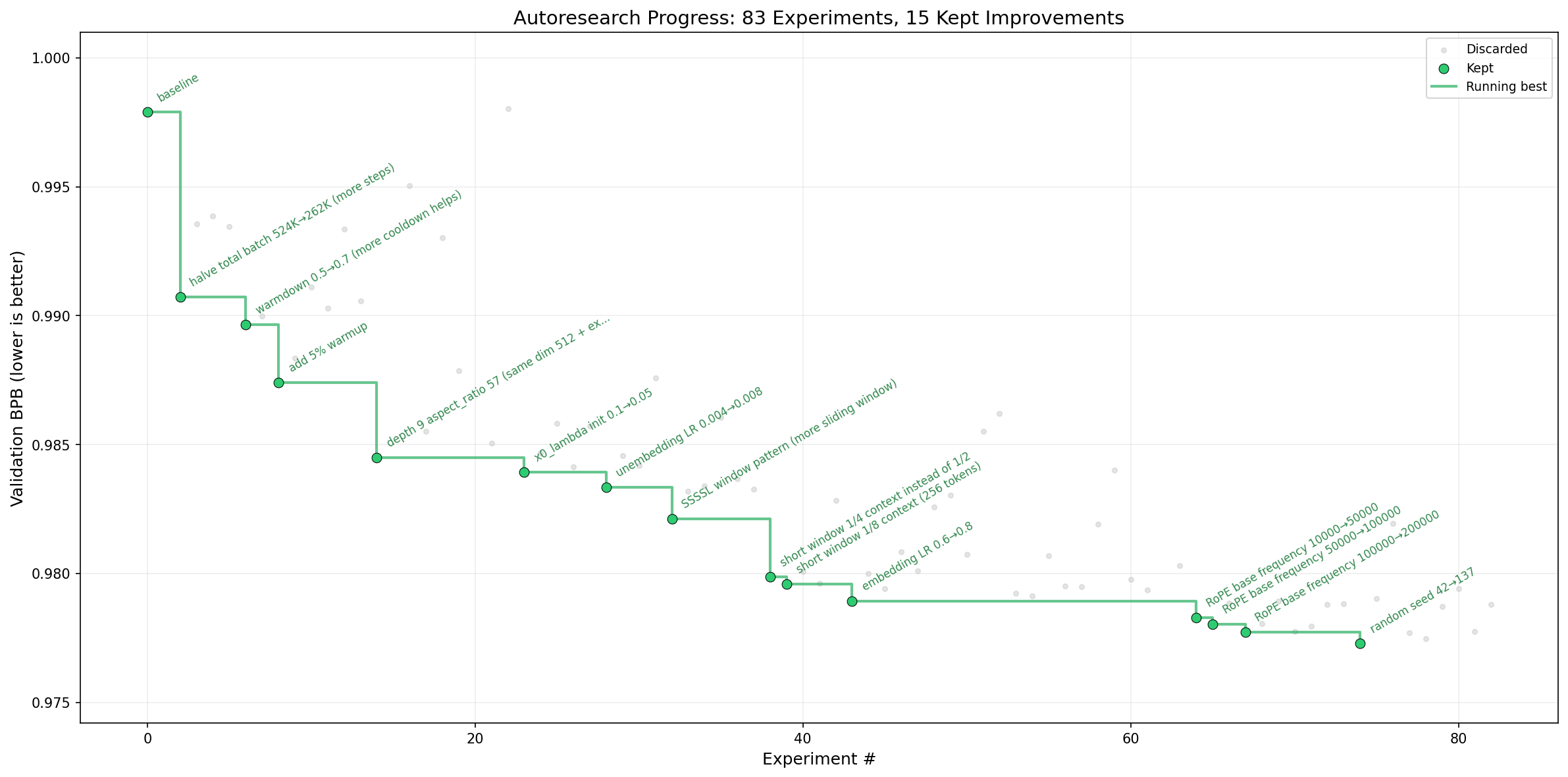

Autonomous AI Research

Give an AI agent a small LLM training setup. Let it experiment overnight. Wake up to a better model. Explore the full source code below.

5 min: time budget per run

~12/hr: experiments per hour

~100: experiments overnight

val_bpb: single metric (lower = better)
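As a rough check on how these figures fit together, here is a minimal sketch. It assumes that "overnight" means about 8 hours and that `val_bpb` stands for validation bits per byte (cross-entropy converted from nats to bits and normalized by byte count); the page states neither. The function names are hypothetical and are not taken from the project's source.

```python
import math

RUN_MINUTES = 5        # time budget per run, as stated above
OVERNIGHT_HOURS = 8    # assumption: the page does not define "overnight"


def runs_per_hour(run_minutes: float) -> float:
    """Upper bound on runs per hour, ignoring any overhead between runs."""
    return 60 / run_minutes


def runs_overnight(run_minutes: float, hours: float) -> int:
    """Whole runs that fit in the given number of hours."""
    return int(runs_per_hour(run_minutes) * hours)


def bits_per_byte(total_nats: float, total_bytes: int) -> float:
    """Convert a summed cross-entropy loss in nats to bits per byte.

    Assumed meaning of val_bpb; lower means the model compresses
    the validation text better.
    """
    return total_nats / (math.log(2) * total_bytes)


if __name__ == "__main__":
    print(runs_per_hour(RUN_MINUTES))                    # 12.0
    print(runs_overnight(RUN_MINUTES, OVERNIGHT_HOURS))  # 96
```

Under these assumptions, 12 runs per hour over 8 hours gives 96 runs, which matches the "~100" figure. A per-byte metric also stays comparable if an experiment changes the tokenizer, which may be why a single number can rank every run.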